Conduktor Gateway

Route. Protect. Transform.

A Kafka proxy that gives platform teams the controls they've always wanted without asking developers to change a single line of code.

Augment Your Infrastructure.

Kafka is powerful, but it wasn't built for governance, security, or policy enforcement. A Kafka Gateway closes that gap, so platform teams can tailor Kafka to their requirements, not the other way around.

For Platform Teams: Scale Kafka across teams without multiplying infrastructure or complexity.

For Security & Compliance: Enforce encryption and data policies at the infrastructure layer, not in application code.

What Is Conduktor Gateway?

Conduktor Gateway is a Kafka proxy that sits between your client applications and your Kafka brokers. It gives platform teams a single control point for security, routing, data quality, and multi-tenancy without changing application code.

Native Kafka protocol

Gateway speaks the Kafka wire protocol. Clients connect to it exactly like a broker. Just update bootstrap.servers and the proxy handles authentication, encryption, routing, and observability transparently.
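As a rough illustration of that claim, the sketch below compares two client configuration dictionaries that differ only in `bootstrap.servers`. The host names `kafka-broker:9092` and `gateway:6969`, the helper `point_at_gateway`, and the other settings are placeholders assumed for this example, not values from a real deployment.

```python
# Hypothetical client settings; host names and ports are placeholders.
direct_config = {
    "bootstrap.servers": "kafka-broker:9092",
    "group.id": "order-service",
    "auto.offset.reset": "earliest",
}


def point_at_gateway(config: dict, gateway_address: str) -> dict:
    """Return a copy of the client config that connects through the proxy."""
    updated = dict(config)
    updated["bootstrap.servers"] = gateway_address
    return updated


gateway_config = point_at_gateway(direct_config, "gateway:6969")

# Collect the keys whose values differ between the two configurations.
changed_keys = sorted(
    key for key in direct_config if direct_config[key] != gateway_config[key]
)
print(changed_keys)
```

Every other client setting is carried over untouched, which is the point of the "clients connect to it exactly like a broker" statement.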

Infrastructure-layer control

Add capabilities that aren't available natively, like field-level encryption, virtual clusters, SQL-based topic views, and chaos testing, without asking every application team to implement them individually.

Zero application changes

Every produce and consume request passes through the proxy. Policies are applied at the infrastructure layer, so platform teams can enforce standards across all teams from one place.

Just need to reach Kafka across networks? Try our free Kafka proxy. It connects clients to clusters sitting in other VPCs, clouds, or private networks, with no changes to your brokers or credentials.

Gateway Use Cases

Routing, Multi-tenancy & Performance

Eliminate network barriers, deliver isolated Kafka environments without separate clusters, and optimize throughput at the proxy layer.

Connect without code changes

Remove network barriers to Kafka connectivity with Kafka proxy routing, centralize authentication through OIDC integration, and enforce fine-grained access control beyond what native ACLs provide.

- Cross-network connectivity: routing client connections through Gateway without client configuration changes

- Centralized authentication: integrating with external OIDC systems for unified access control across hybrid deployments

- Application audit trails: tracking every action across Kafka resources to meet SOC2, ISO 27001, and GDPR requirements

# Gateway Configuration

gateway:

environment:

GATEWAY_SECURITY_MODE: GATEWAY_MANAGED

GATEWAY_SECURITY_PROTOCOL: SASL_PLAINTEXT

# OIDC Provider Settings

GATEWAY_OAUTH_JWKS_URL: "https://your-idp.com/.well-known/jwks.json"

GATEWAY_OAUTH_EXPECTED_ISSUER: "https://your-idp.com"

GATEWAY_OAUTH_EXPECTED_AUDIENCES: "kafka-gateway"

GATEWAY_OAUTH_SUB_CLAIM_NAME: "sub"

# Map OIDC identities to Gateway Service Accounts

apiVersion: gateway/v2

kind: GatewayServiceAccount

metadata:

name: my-application

spec:

type: EXTERNAL

externalNames:

- "oauth-subject-id-from-token" # Value from 'sub' claim in JWT

// Audit log event

{

"id": "f47ac10b-58cc-4372-a567-0e02b2c3d479",

"type": "APIKEYS_REQUEST",

"time": "2024-10-15T14:30:45.123Z",

"source": "//kafka/cluster/production",

"authenticationPrincipal": "tenant-acme",

"userName": "order-service",

"connection": {

"localAddress": "172.17.0.2:6969",

"remoteAddress": "192.168.1.42:52341"

},

"eventData": {

"apiKeys": "PRODUCE",

"topics": [

{ "name": "orders", "partition": 0 },

{ "name": "orders", "partition": 1 }

]

},

"specVersion": "0.1.0"

}

Decouple tenants from clusters

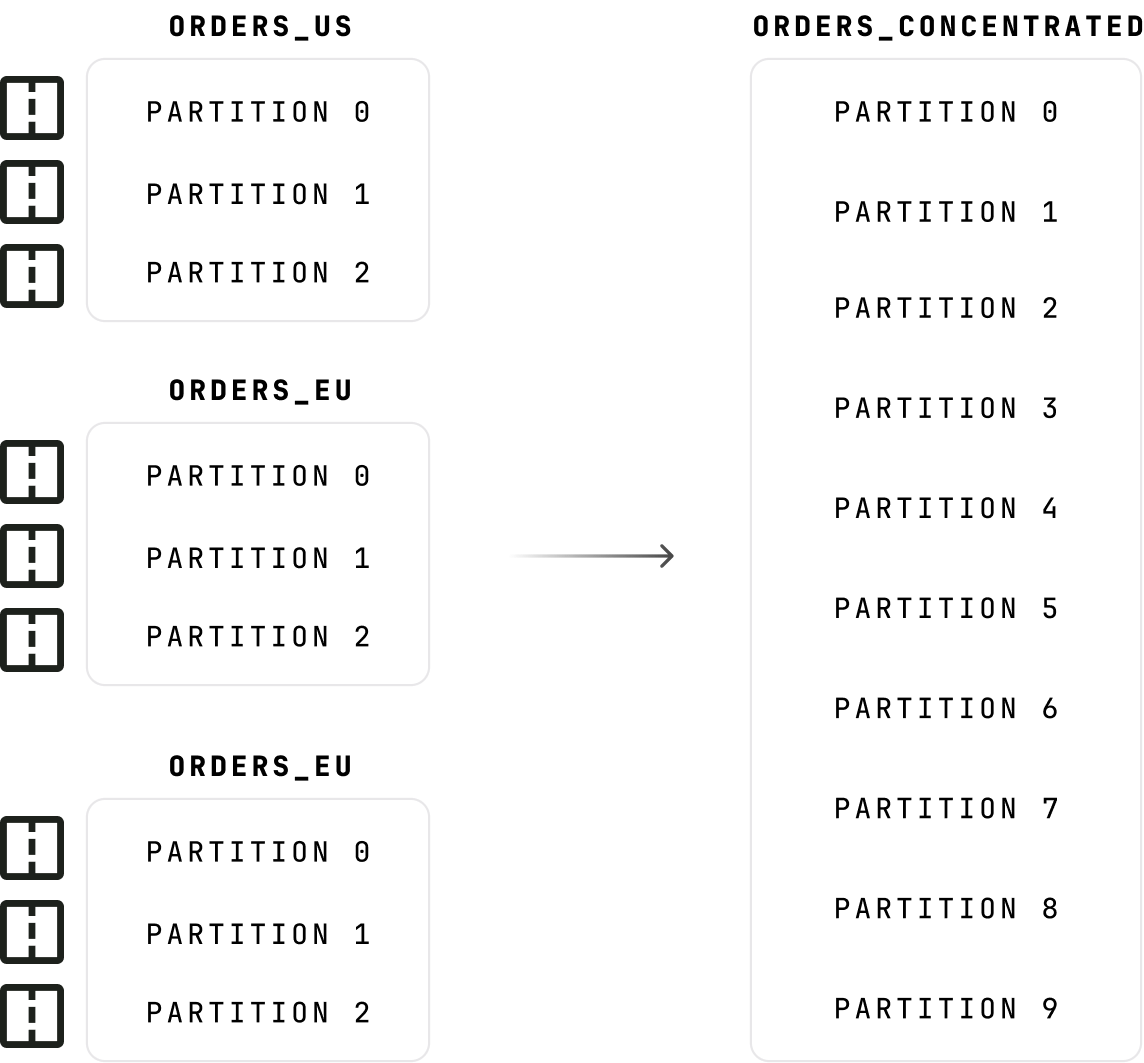

Deliver isolated Kafka environments to multiple teams without the cost of separate clusters, eliminate topic naming chaos, and dramatically reduce partition counts.

- Virtual clusters: deploying multiple logical Kafka clusters on a single physical cluster with isolated namespaces and ACL boundaries

- Topic concentration: consolidating low-volume topics into shared physical topics, reducing partition count by 90%+

- Topic aliases: exposing topics under aliased names, to rename them without client changes or to hide internal naming
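Conceptually, a topic alias is a lookup from the name a client uses to the physical topic behind it. The sketch below models that lookup with a plain dictionary; the `ALIASES` table and `resolve_topic` function are hypothetical names for illustration and say nothing about how Gateway stores or resolves aliases internally.

```python
# Hypothetical alias table keyed by (virtual cluster, alias name).
ALIASES = {
    ("partner-team", "customers"): "internal-crm-customers",
    ("partner-team", "orders"): "internal-billing-orders",
}


def resolve_topic(vcluster: str, topic: str) -> str:
    """Return the physical topic for an alias, or the name unchanged."""
    return ALIASES.get((vcluster, topic), topic)


print(resolve_topic("partner-team", "customers"))
print(resolve_topic("partner-team", "unaliased-topic"))
```

Because the client only ever sees the alias, the physical topic can be renamed by updating the table rather than every application.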

apiVersion: gateway/v2

kind: VirtualCluster

metadata:

name: payments-team

spec:

type: Standard

aclEnabled: true

superUsers:

- payments-admin

apiVersion: gateway/v2

kind: VirtualCluster

metadata:

name: orders-team

spec:

type: Standard

aclEnabled: true

superUsers:

- orders-admin

apiVersion: gateway/v2

kind: AliasTopic

metadata:

name: customers

vCluster: partner-team

spec:

physicalName: internal-crm-customers

apiVersion: gateway/v2

kind: AliasTopic

metadata:

name: orders

vCluster: partner-team

spec:

physicalName: internal-billing-orders

Handle load efficiently

Reduce broker load from repetitive reads, handle oversized payloads without impacting cluster performance, and provide filtered topic views without stream processing complexity.

- SQL topics: creating read-only virtual topics with SQL-based filtering and projections at the gateway layer

- Caching: serving frequently-accessed messages from Gateway cache to reduce broker load for high-frequency reads

- Large message offload: automatically offloading messages exceeding size thresholds to S3 or Azure Blob Storage
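A SQL topic combines a row filter with a column projection. The sketch below shows what that combination means for a handful of in-memory records, using the same predicate as the `customers-adult` example in this section (`age >= 18` and `country = 'US'`). The sample records and the `adult_us_view` function are invented for illustration; this is not how Gateway evaluates SQL.

```python
# Invented sample records standing in for messages on a 'customers' topic.
records = [
    {"firstName": "Ada", "lastName": "Li", "email": "ada@example.com",
     "country": "US", "age": 34},
    {"firstName": "Bo", "lastName": "Ng", "email": "bo@example.com",
     "country": "US", "age": 16},
    {"firstName": "Cy", "lastName": "Roy", "email": "cy@example.com",
     "country": "FR", "age": 40},
]

# Columns kept by the projection (the SELECT list).
PROJECTED = ("firstName", "lastName", "email", "country")


def adult_us_view(rows):
    """Keep rows matching the WHERE clause, then keep only projected fields."""
    return [
        {field: row[field] for field in PROJECTED}
        for row in rows
        if row["age"] >= 18 and row["country"] == "US"
    ]


view = adult_us_view(records)
print(view)
```

Note that `age` is used by the filter but dropped by the projection, so consumers of the view never see it.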

apiVersion: gateway/v2

kind: Interceptor

metadata:

name: sql-filter-adults

spec:

pluginClass: io.conduktor.gateway.interceptor.VirtualSqlTopicPlugin

priority: 100

config:

virtualTopic: customers-adult

statement: |

SELECT firstName, lastName, email, country

FROM customers

WHERE age >= 18 AND country = 'US'

schemaRegistryConfig:

host: http://schema-registry:8081

apiVersion: gateway/v2

kind: Interceptor

metadata:

name: cache-high-traffic-topics

spec:

pluginClass: io.conduktor.gateway.interceptor.CacheInterceptorPlugin

priority: 100

config:

topic: "events.*"

cacheConfig:

type: IN_MEMORY

inMemConfig:

cacheSize: 1000

expireTimeMs: 60000

apiVersion: gateway/v2

kind: Interceptor

metadata:

name: offload-large-messages-s3

spec:

pluginClass: io.conduktor.gateway.interceptor.LargeMessageHandlingPlugin

priority: 100

config:

topic: "media.*"

minimumSizeInBytes: 1048576

localDiskDirectory: /tmp/kafka-offload

s3Config:

bucketName: kafka-large-messages

region: us-east-1

Govern your schema registry

Add authentication, access control, and observability to your schema registry with a drop-in Kafka proxy that enforces governance on every operation.

- JWT/OIDC authentication: validating tokens from your existing identity provider before any request reaches the registry

- Per-subject access controls: enforcing Read and Write permissions with exact match, wildcard, and prefix matching

- Full observability: tracing every operation with OpenTelemetry, metering with Prometheus, and logging in structured JSON
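The per-subject matching rules can be sketched in a few lines. The example below uses glob matching from Python's standard library as a stand-in for exact, wildcard, and prefix matching, and it assumes that a WRITE permission also grants READ; neither detail is confirmed by this page, so treat both as assumptions. The `PERMISSIONS` table mirrors the permission entries shown in this section.

```python
from fnmatch import fnmatchcase

# Permission entries per service account, mirroring this section's examples.
PERMISSIONS = {
    "admin": [{"subject": "*", "permissionType": "WRITE"}],
    "payments-team": [
        {"subject": "payments-*", "permissionType": "WRITE"},
        {"subject": "orders-*", "permissionType": "READ"},
    ],
    "analytics": [{"subject": "*", "permissionType": "READ"}],
}

# Assumption: WRITE implies READ. The real proxy may treat them separately.
GRANTS = {"READ": {"READ"}, "WRITE": {"READ", "WRITE"}}


def is_allowed(account: str, subject: str, action: str) -> bool:
    """Return True if any entry for the account covers the subject and action."""
    for entry in PERMISSIONS.get(account, []):
        matches = fnmatchcase(subject, entry["subject"])
        if matches and action in GRANTS[entry["permissionType"]]:
            return True
    return False


print(is_allowed("payments-team", "payments-value", "WRITE"))
print(is_allowed("payments-team", "orders-value", "WRITE"))
```

An account with no entries is denied everything, which keeps the default closed.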

# docker-compose.yml

schema-registry-proxy:

ports:

- "8080:8080"

- "9464:9464" # Prometheus metrics

environment:

# Schema Registry backend

CONFLUENT_SCHEMA_REGISTRY_URL: http://schema-registry:8081

# JWT authentication via OIDC provider

AUTH_PROVIDER: jwt

JWT_JWKS_URL: http://keycloak:8080/realms/kafka/protocol/openid-connect/certs

JWT_SUBJECT_CLAIM_NAME: sub

# Dynamic permissions via Kafka

AUTH_USE_REACTIVE_CONFIG: true

KAFKA_BOOTSTRAP_SERVERS: kafka:9092

# OpenTelemetry tracing

OTEL_EXPORTER_OTLP_ENDPOINT: http://otel-collector:4317

# Permission format (Kafka topic: _conduktor_srp_commands)

# Key: service account name

# Value: permission entries

# Admin — full access to all subjects

{ "permissions": [

{ "subject": "*",

"permissionType": "WRITE" }

]}

# Payments team — prefix matching

{ "permissions": [

{ "subject": "payments-*",

"permissionType": "WRITE" },

{ "subject": "orders-*",

"permissionType": "READ" }

]}

# Analytics — read-only, all subjects

{ "permissions": [

{ "subject": "*",

"permissionType": "READ" }

]}

# Get a JWT token (client credentials flow)

TOKEN=$(curl -s -X POST \

http://keycloak:8080/realms/kafka/protocol/openid-connect/token \

-d "grant_type=client_credentials" \

-d "client_id=payments-service" \

-d "client_secret=secret" | jq -r '.access_token')

# Register a schema through the proxy

curl -X POST http://localhost:8080/subjects/payments-value/versions \

-H "Authorization: Bearer $TOKEN" \

-H "Content-Type: application/json" \

-d '{"schema": "{\"type\":\"record\",\"name\":\"Payment\",\"fields\":[{\"name\":\"id\",\"type\":\"string\"}]}"}'

# Response: {"id": 1}

# Metrics: schema_registry_requests_total +1

# Trace: full span in Jaeger/OTLP

Resilience & Disaster Recovery

Prevent developer misconfigurations from destabilizing your platform, maintain operational continuity during failures, and validate fault tolerance through controlled testing.

Prevent misconfigurations

Eliminate noisy-neighbor problems and configuration drift with Kafka Gateway safeguards that enforce naming conventions, retention limits, quotas, and replication rules before issues reach production.

- Configuration policies: enforcing topic naming conventions, retention limits, replication rules, and schema requirements at provision and runtime

- Traffic control & quotas: enforcing bandwidth and rate limits per tenant or cluster to prevent noisy neighbors

- Producer & consumer policies: enforcing acks, compression, and idempotence requirements across all producers and controlling fetch behavior on consumers
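The difference between a blocking rule and an overriding rule is easiest to see in code. The sketch below re-implements, in plain Python, the kind of check described by this section's topic-governance-policy example: a naming regex and replication factor that block, and a partition range that overrides to a default. The `POLICY` structure and `evaluate_create_topic` function are hypothetical, and the evaluation order is an assumption.

```python
import re

# Values mirror the topic-governance-policy example in this section.
POLICY = {
    "naming": re.compile(r"^[a-z]+-[a-z]+-[a-z]+$"),  # action: BLOCK
    "replication": {"min": 3, "max": 3},              # action: BLOCK
    "partitions": {"min": 3, "max": 12, "override": 6},  # action: OVERRIDE
}


def evaluate_create_topic(name: str, partitions: int, replication_factor: int):
    """Return (accepted, effective_partitions, reason) for a create request."""
    if not POLICY["naming"].match(name):
        return (False, None, "name violates naming convention")
    rf = POLICY["replication"]
    if not rf["min"] <= replication_factor <= rf["max"]:
        return (False, None, "replication factor out of range")
    limits = POLICY["partitions"]
    if not limits["min"] <= partitions <= limits["max"]:
        partitions = limits["override"]  # rewrite instead of rejecting
    return (True, partitions, "ok")


print(evaluate_create_topic("payments-orders-created", 50, 3))
print(evaluate_create_topic("Payments_Orders", 6, 3))
```

A BLOCK rule fails the request outright, while an OVERRIDE rule lets it through with a corrected value, so developers are not interrupted for settings the platform team is happy to normalize.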

apiVersion: gateway/v2

kind: Interceptor

metadata:

name: topic-governance-policy

spec:

pluginClass: io.conduktor.gateway.interceptor.safeguard.CreateTopicPolicyPlugin

priority: 100

config:

namingConvention:

value: "^[a-z]+-[a-z]+-[a-z]+$"

action: BLOCK

numPartition:

min: 3

max: 12

action: OVERRIDE

overrideValue: 6

replicationFactor:

min: 3

max: 3

action: BLOCK

retentionMs:

min: 86400000

max: 604800000

action: OVERRIDE

overrideValue: 259200000

apiVersion: gateway/v2

kind: Interceptor

metadata:

name: producer-rate-limit

scope:

vCluster: payments-team

spec:

pluginClass: io.conduktor.gateway.interceptor.safeguard.ProducerRateLimitingPolicyPlugin

priority: 100

config:

maximumBytesPerSecond: 10485760

action: BLOCK

apiVersion: gateway/v2

kind: Interceptor

metadata:

name: consumer-rate-limit

scope:

vCluster: payments-team

spec:

pluginClass: io.conduktor.gateway.interceptor.safeguard.ConsumerRateLimitingPolicyPlugin

priority: 100

config:

maximumBytesPerSecond: 52428800

Migrate and recover with confidence

Maintain operational continuity during cluster migrations and failover with transparent Kafka proxy redirection, and validate fault tolerance through controlled chaos testing.

- Cluster switching & failover: migrating applications between clusters or switching to failover infrastructure without application config changes or team coordination

- Chaos engineering: injecting latency, protocol errors, and message corruption via Gateway interceptors to test resilience without disrupting production

# Gateway cluster configuration

config:

main:

bootstrap.servers: kafka-primary:9092

security.protocol: SASL_SSL

sasl.mechanism: PLAIN

failover:

bootstrap.servers: kafka-secondary:9092

gateway.roles: failover

# Switch from main → failover

curl -X POST 'http://localhost:8888/gateway/v2/cluster-switching' \

-H 'Content-Type: application/json' \

-d '{"fromPhysicalCluster": "main", "toPhysicalCluster": "failover"}'

# Chaos testing interceptor

apiVersion: gateway/v2

kind: Interceptor

metadata:

name: chaos-broken-broker

spec:

pluginClass: io.conduktor.gateway.interceptor.chaos.SimulateBrokenBrokersPlugin

priority: 100

config:

rateInPercent: 100

errorMap:

FETCH: UNKNOWN_SERVER_ERROR

PRODUCE: CORRUPT_MESSAGE

Data Protection

Control message metadata, enforce data quality, protect sensitive fields, and verify message integrity at the proxy layer.

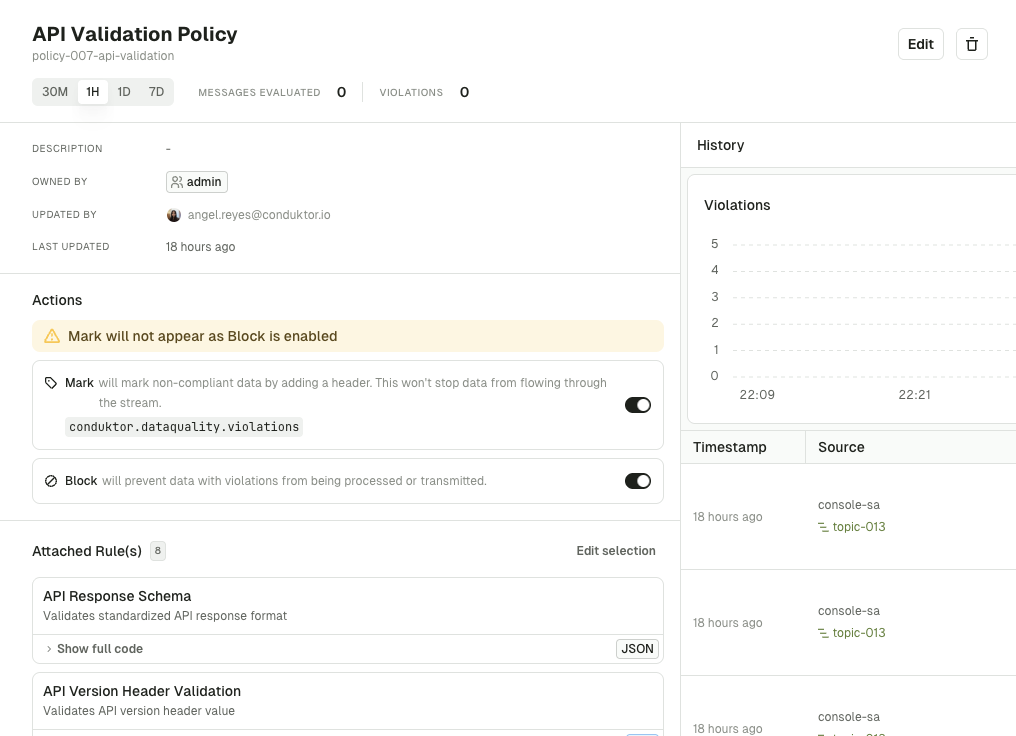

Stop bad data at the source

Stop malformed and non-compliant data at the source rather than discovering quality issues after they've broken downstream systems.

- Data quality detection: evaluating data flowing through Gateway for compliance with validation rules and quality standards

- Data quality enforcement: blocking, redirecting, or marking messages that violate quality rules before they reach Kafka

{

"name": "myDataQualityProducerPlugin",

"pluginClass": "io.conduktor.gateway.interceptor.safeguard.DataQualityProducerPlugin",

"priority": 100,

"config": {

"statement": "SELECT x FROM orders WHERE amount_cents > 0 AND amount_cents < 1000000",

"schemaRegistryConfig": {

"host": "http://schema-registry:8081"

},

"action": "BLOCK_WHOLE_BATCH",

"deadLetterTopic": "dead-letter-topic",

"addErrorHeader": false

}

}
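The rule in the configuration above accepts orders whose `amount_cents` lies strictly between 0 and 1,000,000. The sketch below expresses that predicate in Python and pairs it with one plausible reading of `BLOCK_WHOLE_BATCH`, namely that a single failing record rejects every record in the batch. That reading is an assumption based on the action's name, and both function names are hypothetical.

```python
def is_valid(order: dict) -> bool:
    """Mirror the rule: amount_cents > 0 AND amount_cents < 1000000."""
    amount = order.get("amount_cents")
    return isinstance(amount, int) and 0 < amount < 1_000_000


def apply_block_whole_batch(batch: list):
    """Return (accepted, rejected); one bad record rejects the whole batch."""
    if all(is_valid(order) for order in batch):
        return list(batch), []
    return [], list(batch)


good_batch = [{"amount_cents": 1999}, {"amount_cents": 250}]
mixed_batch = [{"amount_cents": 1999}, {"amount_cents": -5}]

print(apply_block_whole_batch(good_batch))
print(apply_block_whole_batch(mixed_batch))
```

Rejecting the whole batch is the strictest option: it keeps partial writes out of the topic at the cost of resending valid records alongside the corrected ones.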

Encrypt consistently across apps

Ensure consistent encryption across all applications with centralized KMS enforcement at the Kafka Gateway layer, and enable analytics on sensitive data through field-level tokenization.

- Centralized encryption: enforcing encryption with a standardized KMS connection to prevent config drift and enable crypto-shredding for GDPR compliance

- Multi-cloud KMS integration: connecting with Vault, AWS KMS, Azure Key Vault, GCP KMS, or Fortanix for PCI-DSS, HIPAA, and GDPR compliance

- Field-level encryption: encrypting sensitive fields with schema-based targeting while keeping non-sensitive data readable

- Tokenization: replacing sensitive values with tokens while preserving format for analytics

apiVersion: gateway/v2

kind: Interceptor

metadata:

name: encrypt-pii-fields

spec:

pluginClass: io.conduktor.gateway.interceptor.EncryptPlugin

priority: 100

config:

topic: "customers.*"

recordValue:

fields:

- fieldName: ssn

keySecretId: "vault-kms://vault:8200/transit/keys/pii-key"

algorithm: AES256_GCM

- fieldName: creditCard.number

keySecretId: "vault-kms://vault:8200/transit/keys/payment-key"

algorithm: AES256_GCM

- fieldName: email

keySecretId: "in-memory-kms://email-key"

algorithm: AES128_GCM

kmsConfig:

vault:

uri: http://vault:8200

type: TOKEN

token: ${VAULT_TOKEN}

apiVersion: gateway/v2

kind: Interceptor

metadata:

name: encrypt-with-kms

spec:

pluginClass: io.conduktor.gateway.interceptor.EncryptPlugin

priority: 100

config:

topic: ".*"

recordValue:

fields:

- fieldName: ssn

keySecretId: "vault-kms://..."

- fieldName: payment.cardNumber

keySecretId: "aws-kms://..."

- fieldName: healthRecord

keySecretId: "azure-kms://..."

kmsConfig:

vault:

uri: http://vault:8200

type: APP_ROLE

aws:

basicCredentials:

accessKey: ${AWS_ACCESS_KEY}

azure:

tokenCredential:

tenantId: ${AZURE_TENANT_ID}

Verify message integrity

Verify message authenticity and detect tampering to meet audit and chain-of-custody requirements.

- Cryptographic signing: signing messages at the gateway to detect tampering and verify message origin for compliance requirements
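This page does not say which signing scheme Gateway uses, so the sketch below substitutes HMAC-SHA256 from Python's standard library purely to show how a signature exposes tampering. The key, the sample payloads, and the `sign` and `verify` helpers are all placeholders for illustration.

```python
import hashlib
import hmac


def sign(message: bytes, key: bytes) -> str:
    """Return a hex HMAC-SHA256 signature for the message."""
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def verify(message: bytes, signature: str, key: bytes) -> bool:
    """Check a signature using a constant-time comparison."""
    return hmac.compare_digest(sign(message, key), signature)


key = b"demo-signing-key"  # placeholder; a real key would come from a KMS
original = b'{"txId": "42", "amount_cents": 1999}'
tampered = b'{"txId": "42", "amount_cents": 9999}'

signature = sign(original, key)
print(verify(original, signature, key))
print(verify(tampered, signature, key))
```

HMAC is a symmetric scheme, so it shows that a message was signed by someone holding the shared key; requirements that call for proof of a specific signer would need an asymmetric signature instead.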

apiVersion: gateway/v2

kind: Interceptor

metadata:

name: sign-messages

spec:

pluginClass: io.conduktor.gateway.interceptor.integrity.ProduceIntegrityPolicyPlugin

priority: 100

config:

topic: "transactions.*"

secretKeyUri: "secret/data/signing-key#key"

keyProviderConfig:

vault:

uri: https://vault:8200

type: TOKEN

token: ${VAULT_TOKEN}

Control message metadata

Ensure that message metadata is consistent with enterprise standards without requiring application changes.

- Header & message handling: injecting, modifying, or removing message headers to transform and control metadata centrally
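Header handling boils down to two dictionary operations: adding entries and dropping entries that match a pattern. The sketch below models both, using the `^X-Internal-.*` pattern from this section's removal example and an override flag like the one in the injection example. The function names and sample header values are hypothetical.

```python
import re

# Pattern taken from this section's header-removal example.
INTERNAL_HEADER = re.compile(r"^X-Internal-.*")


def strip_internal_headers(headers: dict) -> dict:
    """Drop every header whose key matches the internal-header pattern."""
    return {k: v for k, v in headers.items() if not INTERNAL_HEADER.match(k)}


def inject_headers(headers: dict, additions: dict, override_if_exists: bool = True) -> dict:
    """Add headers, replacing existing keys only when overriding is enabled."""
    merged = dict(headers)
    for key, value in additions.items():
        if override_if_exists or key not in merged:
            merged[key] = value
    return merged


incoming = {"X-Internal-Debug": "1", "X-Source-Region": "unknown", "trace-id": "abc"}
cleaned = strip_internal_headers(incoming)
tagged = inject_headers(cleaned, {"X-Source-Region": "eu-west-1"})
print(tagged)
```

Doing this once at the proxy means producers do not each need their own header conventions.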

apiVersion: gateway/v2

kind: Interceptor

metadata:

name: inject-tracking-headers

spec:

pluginClass: io.conduktor.gateway.interceptor.DynamicHeaderInjectionPlugin

priority: 100

config:

topic: "orders.*"

headers:

X-Source-Region: "${GATEWAY_REGION}"

X-Gateway-Timestamp: "${timestamp}"

overrideIfExists: true

apiVersion: gateway/v2

kind: Interceptor

metadata:

name: remove-internal-headers

spec:

pluginClass: io.conduktor.gateway.interceptor.safeguard.MessageHeaderRemovalPlugin

priority: 100

config:

topic: ".*"

headerKeyRegex: "^X-Internal-.*"

External Data Sharing

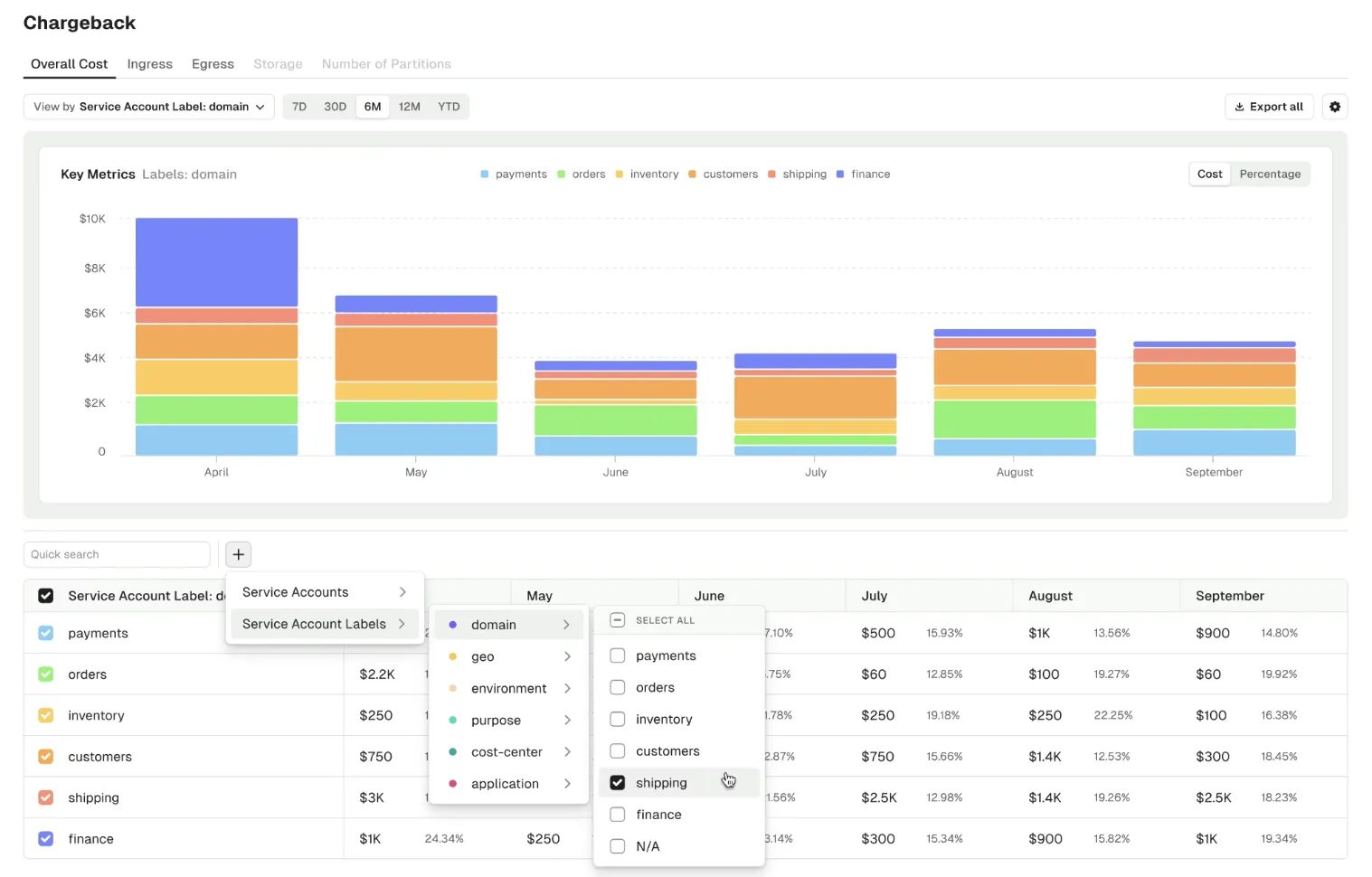

Share Kafka data with external partners without overexposing your infrastructure, adapt data formats for partner consumption, and track usage for cost allocation.

Share data securely

Share Kafka data with external partners without overexposing your infrastructure, adapt data formats without dual-write patterns, and track usage for billing.

- Partner virtual clusters: provisioning isolated virtual clusters with topic mappings, rate limits, and service accounts for third parties

- Partner topic views: creating logical topic views with aliasing for partner-specific access without duplicating infrastructure

- Data format transformations: transforming message formats using SQL expressions for partner-specific schemas

- Partner chargeback: tracking consumption metrics by partner for usage attribution and cost allocation

apiVersion: gateway/v2

kind: VirtualCluster

metadata:

name: partner-a

spec:

type: Partner

aclEnabled: true

superUsers:

- partner-admin

apiVersion: gateway/v2

kind: GatewayServiceAccount

metadata:

name: partner-admin

vCluster: partner-a

spec:

type: LOCAL

apiVersion: gateway/v2

kind: AliasTopic

metadata:

name: orders

vCluster: partner-a

spec:

physicalName: internal-orders

Kafka Proxy Comparison

How does Conduktor Gateway stack up?

Compare Conduktor Gateway against the field, feature by feature.

Confluent Gateway, Kong, Gravitee, Aklivity Zilla, Kroxylicious

Measurable Impact

Real results from platform teams using Gateway.

European airline moved to Confluent Cloud in 9 months with zero downtime.

Payment processor achieved MasterCard and VISA certification with Gateway encryption.

FlixBus scaled multi-tenancy without multiplying infrastructure.

Encryption, routing, and policies applied at the proxy layer, not in applications.


Ready to Try Gateway?

See how platform teams use our Kafka proxy to add encryption, multi-tenancy, and traffic control without changing application code.